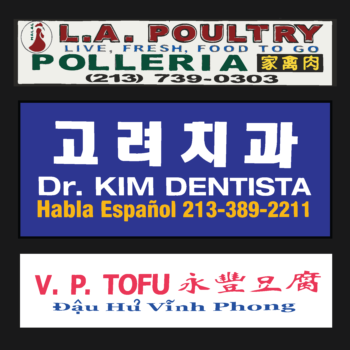

This unique dataset opens up many exciting possibilities, revealing patterns we couldn’t see before. For example, we can now map neighborhoods that have not yet been officially recognized but where communities have been publicly expressing themselves, such as Little India, Little Tehran, and Little Brazil. Or, instead of focusing on the one official ethnic town such as “Chinatown,” we can now see that there are actually five much larger “Chinatowns” in the LA region. We are also finding that clustering is not the only pattern: there are other geographies too, such as a network of dispersed nodes for Greek LA.

One reason we can find places of such different shapes and scales is that our data is disaggregated to the individual parcel level. We can aggregate the data into organic clusters rather than predefined areal units such as census tracts, which are often not socially meaningful. Another reason is that we capture all text in the built environment, not just the official signs. Our data includes words from commercial and institutional places, and from fliers posted on windows and light poles. We can see how people are expressing themselves publicly in the everyday city.
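
To make the idea of aggregating parcel-level records into organic clusters more concrete, here is a minimal sketch in Python. It is not the method used for this dataset, which is not described here. It assumes each parcel is reduced to a centroid in planar coordinates and groups parcels that are linked by a chain of neighbors within a chosen distance. The function name `cluster_points`, the `max_gap` parameter, and the coordinates are all hypothetical and made up for illustration.

```python
from math import hypot


def cluster_points(points, max_gap):
    """Label points so that two points share a cluster when a chain of
    neighbors, each within max_gap of the next, connects them."""
    labels = [None] * len(points)
    current = 0
    for start in range(len(points)):
        if labels[start] is not None:
            continue  # already assigned to an earlier cluster
        labels[start] = current
        stack = [start]
        while stack:
            i = stack.pop()
            xi, yi = points[i]
            for j, (xj, yj) in enumerate(points):
                if labels[j] is None and hypot(xi - xj, yi - yj) <= max_gap:
                    labels[j] = current
                    stack.append(j)
        current += 1
    return labels


# Hypothetical parcel centroids in arbitrary planar units (not real data).
parcels = [(0, 0), (1, 0), (1, 1), (10, 10), (11, 10), (30, 5)]
labels = cluster_points(parcels, max_gap=1.5)
print(labels)
```

Because the groups grow from the points themselves, a cluster can take any shape or size, and an isolated parcel simply becomes its own group instead of being absorbed into a fixed boundary such as a census tract. On real data, a density-based method and projected coordinates would likely be a better fit than this simple distance threshold.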